Category: Standard SQL

Column Order in SQL Tables

Read The language rules we know – but don’t know we know which says “Adjectives in English absolutely have to be in this order: opinion-size-age-shape-colour-origin-material-purpose Noun.” Then you’ll know this is correct:

CREATE TABLE little_girls (

grade FLOAT, height SMALLINT, birth DATE, fatness BLOB,

hair SET('blond','black'), previous_school VARCHAR(64),

is_made_of_sugar_and_spice_and_everything_nice BOOL,

reason_for_existence TEXT DEFAULT 'thank heaven');

because columns are attributes and so are adjectives.

Now I’ll tell you the other factors that affect whether chicken columns precede egg columns.

Primary key columns first

As the Oracle “Ask Tom” guy said unenthusiastically in 2012: “For example – most people – pretty much all people – would put the primary key FIRST in the table. It is a universally accepted approach – convention.” Sometimes this is part of a “rule of thumb”. Sometimes it’s part of “logical data modeling standards”. Sometimes it’s part of “business rules”.

I’ve even seen an “automated analysis of database design” tool that marks you down if you put the primary key somewhere else.

General before specific

The United States Postal Service says “Automated mail processing machines read addresses on mailpieces from the bottom up and will first look for a city, state, and ZIP Code.” because those folks know that what matters first is the larger area and what matters last is the street spot.

That’s also what people care about so often they’ll say

genus before species,

or schema name before object name,

or test type before test result.

Specificity is not the same as uniqueness — there might be more addresses “100 Main Street” then there are states in the USA. But it is about moving from the target to the bullseye. Unique-first might be an advantage for CREATE INDEX, but not for CREATE TABLE. And that might mean you might want to say CREATE TABLE t (column1, column2 PRIMARY KEY (column2, column1)) but it does not mean you want to reverse the order of display.

Surname first

Looking at the States stats again, there are about 5 million occurrences of the most common given name (James) and about 2.5 million occurrences of the most common last name (Smith). That’s anecdotal but corresponds to the general belief that last names are uniquer than first names. What matters more is that the name James is less useful for identification, and therefore less important.

Alphabetical

If you’ve ever heard “Choose one from column A and one from Column B” you’ll feel this isn’t quite absurd, and if you’ve got 26 columns named column_a through column_z then you’ll appreciate it if there’s an arbitrary-but-familiar way to glance through them.

And in fact it’s not arbitrary if your column names have prefixes that indicate what group they belong to. Surely user_name and user_address belong together, or if it’s the address suffix that matters more then alphabetical DESC would work though I’ve never seen it proposed.

Important before trivial

There’s advice that columns should appear in order of importance, and if users don’t know what’s important then there are clues.

Is the value usually the same or usually NULL? Then it carries little information.

Is the column name absent from most SELECT statements? Then most people don’t care.

Is it indexed, or unique, or in a foreign key? Then somebody has already decided it matters. Accept that and bump them closer to the start.

What matters for SELECT * is:

the columns that you like most should be on the left of the screen (even if you’re Arab) so you don’t need to use horizontal scrolling.

What matters for SELECT column_x is:

if the row is so big that it might stretch over two blocks, the DBMS might be happier if the column is in the first block.

The ancient wisdom

There’s a rule that I’ve seen often, with occasional variations:

“Primary key columns first.

Foreign key columns next.

Frequently searched columns next.

Frequently updated columns later.

Nullable columns last.

Least used nullable columns after more frequently used nullable columns.

Blobs in own table with few other columns.”

What I’ve seen much less often is a link to what appears to be the first occurrence: https://community.hpe.com/t5/operating-system-hp-ux/oracle-columns/td-p/2840825. It’s from 2002 and it’s in response to a question about Oracle.

So, for example, if your DBMS happens to be one that doesn’t store NULLs if they’re the last things in the row, then that’s good advice. If on the other hand your DBMS happens to be one that has a preface of bits indicating whether the column is NULL, then it’s pointless. Documentation about this might for one InnoDB case be slightly misleading; however, my point is that most of those items will depend on your DBMS and the clauses that you use for a storage engine.

Nevertheless I’ll endorse the “NULLs last” tip because I’ve seen an old claim that it affects SQL Server too. That is, if it’s been a rule for more than one DBMS, even if it’s pointless, maybe it affects compatibility because others still think it’s real.

Old SQL Server rules, for what they’re worth, also include “fixed fields first” and “short before long” but they’re obsolete. Also if you’ve got InnoDB’s compact format then lengths of variable columns are in the header. So when you see claims that you have to journey through the varchar columns till you reach the char columns, be skeptical.

Autocompletion

Suppose in the past you said

CREATE TABLE n (s1 INT, s2 INT);

and now (after rehash) you start an INSERT statement:

INSERT INTO n (

at this point the ocelotgui autocompleter will present you with a hint and you can save a fraction of a second by selecting the first item so you don’t have to navigate.

Unfortunately you won’t save anything when the statement is SELECT, though, because the SELECT list comes before the FROM clause. That is, the GUI won’t know what table you’re using until you’ve already specified the columns.

Speaking of irritating design, I take this opportunity to mention the syntax:

INSTALL PLUGIN plugin_name SONAME 'plugin_library';

Notice again how the GUI can’t hint about a name at the appropriate point, because it won’t know the library yet. I class this as a violation by the vendor of the advice “general before specific”.

Compression

If there is a “run” of bytes with the same value, then a “run-length encoding” (RLE) algorithm might save space. Thus if if it is likely that 20 columns will all contain 0, then keeping them together is a good idea.

But it’s only an idea. The practical considerations are that (a) there’s no compression (b) the typical compression is some variant of Lempel-Ziv which wouldn’t require the columns to be exactly contiguous, (c) it’s hard to predict what algorithms and byte placements will actually save the most space without trying them all (d) they’re not all available unless you build from source.

Consistency

Consistency between tables: if table #1 has columns in a particular order, then table #2 should too. Imitate the INFORMATION_SCHEMA tables because they are likely to be static, although the collations of string columns are likely to change. I’d add “imitate examples in the manual”, but only if the examples in the manual are themselves consistent. For example the MySQL manual example

CREATE TABLE pet (name VARCHAR(20), owner VARCHAR(20),

species VARCHAR(20), sex CHAR(1), birth DATE, death DATE);

has no explicit primary key, but the columns might be in order of importance and the final column is the one most likely to contain NULL. For example the MariaDB manual example

CREATE TABLE test.accounts ( id INT PRIMARY KEY AUTO_INCREMENT, first_name VARCHAR(25), last_name VARCHAR(25), email VARCHAR(100), amount DECIMAL(15,2) CHECK (amount >= 0.0), UNIQUE (email) ) ENGINE=InnoDB;

has the PRIMARY KEY clause mixed in the column definition but the UNIQUE clause outside the column definition, and has first name before surname.

Consistency between statements: if WHERE clauses often have WHERE a = 5 AND b = 6, or if ORDER BY clauses often have A, B ASC, or if INSERT statements often have (a, b), then the CREATE TABLE definition can serve as a guide by letting users know that a comes before b by design.

Consistency between definitions: if table #1 has one foreign key referencing table #X and then another foreign key referencing table #Y, and table #2 has one foreign key referencing table #y and then another foreign key referencing table #X, then ask yourself why the order of foreign keys in table #2 is not the same as the order of foreign keys in table #1. Ideally the order will be reflected in entity-relationship diagrams.

ocelotgui changes

The ocelotgui 2.4 release is available for download from github.

The debugger feature won’t work with MariaDB 11.5ff for reasons I’ll explain elsewhere. However, if you download the source and build, it will work. Or wait for ocelotgui 2.5 which (I hope) will be released soon.

SQL:2023 and how well MySQL and MariaDB comply

SQL:2023 is now the official SQL standard. I’ll compare some of the new optional features with what MySQL and MariaDB support already, and say whether I judge they are compliant.

At the moment I don’t have draft documents (my membership on the standard committee ended long ago), so I gratefully get my news from Oracle and Peter Eisentraut. It’s early days, I may be making mistakes and may be guessing wrong about what new features are important.

LPAD and RPAD

MySQL and MariaDB examples:

SELECT LPAD('hi',3,'?'); causes '?hi'.

SELECT RPAD('hi',3,'?'); causes 'hi?'.

Judgment: compliant with SQL:2023 optional feature T055.

The third argument is optional and default is the space character. For some reason I’m not seeing the documentation in the manuals, but it is standard behaviour.

LTRIM and RTRIM and BTRIM

MySQL and MariaDB examples:

SELECT LTRIM(' *'); -- causes '*'.

SELECT RTRIM('* '); -- causes '*'.

Judgment: not compliant with SQL:2023 optional feature T056.

Although SQL:2023 now has LTRIM and RTRIM, they are two-argument,

perhaps a bit like Oracle.

Also SQL:2023 has BTRIM for trimming from both ends. Although MySQL and MariaDB have TRIM(BOTH …), it’s not quite the same thing.

VARCHAR instead of VARCHAR(length)

MySQL and MariaDB example:

CREATE TABLE t(s1 VARCHAR(1)); -- (length) is mandatory

Judgment: not compliant with SQL:2023 optional feature T081

A DBMS that supports feature T081 doesn’t have to include a maximum length, it’s up to the implementation, which in this situation could be 65535 bytes. This is unfortunate and it might be my fault, I think I used to insist that length should be mandatory in MySQL.

Although I can use the TEXT data type instead for indefinite-length strings, it has different characteristics.

ANY_VALUE, emphasizing use with GROUP BY

MySQL and MariaDB example:

SELECT s1, s2 FROM t GROUP BY s1;

Judgment: compliant with SQL:2023 optional feature T626, almost, alas

Probably you noticed that s2 is not in the GROUP BY clause, and yet the syntax is legal — any of the original t values could appear.

The standard would yield something similar with different syntax: SELECT s1, ANY_VALUE(s2) FROM t GROUP BY s1;

Or, as Oracle describes it, “[ANY_VALUE] eliminates the necessity to specify every column as part of the GROUP BY clause”.

That means it’s non-deterministic. Usually I’ve cared more about non-standardness. But now that argument is weaker.

ORDER BY, emphasizing use with GROUP BY

MySQL and MariaDB example:

CREATE TABLE t (s1 int, s2 int, UNIQUE (s2)); INSERT INTO t VALUES (1,1),(7,2),(5,3); SELECT s1 FROM t GROUP BY s1 ORDER BY s2;

Judgment: not compliant with SQL:2023 optional feature F868.

I’m probably misunderstanding something here about the intent, but the effect is that something that’s not in the GROUP BY clause can determine the ordering. In this case, because column s3 is unique, it’s maybe not utterly absurd. But in MySQL and MariaDB, the result is not guaranteed to be in order by column s3.

GREATEST and LEAST

MySQL and MariaDB syntax:

SELECT GREATEST(2,1,3); -- result = 3 SELECT LEAST(2,1,3); -- result = 1

Judgment: compliant with SQL:2023 optional feature T054

UNIQUE NULLS DISTINCT

MySQL and MariaDB example:

CREATE TABLE t (s1 INT, UNIQUE(s1)); INSERT INTO t VALUES (NULL),(NULL);

Judgment: partly compliant with SQL:2023 feature F292 unique null treatment

To understand this, your required reading is my old blog post

NULL and UNIQUE.

You have to read the whole thing to understand that the

standard document was not contradictory, and that MySQL

and MariaDB have always been compliant, because …

In a UNIQUE column, you can insert NULLs multiple times.

However, because lots of people never understood, there are implementations where you cannot do so. Therefore feature F292 allows new syntax:

CREATE TABLE t (s1 INT, UNIQUE NULLS DISTINCT (s1)); or CREATE TABLE t (s1 INT, UNIQUE NULLS NOT DISTINCT (s1));

MySQL and MariaDB could easily half-comply by allowing the first option and saying it is the default. (The standard now says the default is implementation-defined.) (A backward step.)

Hexadecimal and octal and binary integer literals

MySQL and MariaDB example:

SELECT 0x4141;

Judgment: not compliant with SQL:2023 optional feature T661

Did you notice that I said “integer literals”? That’s what’s wrong, 0x4141 in standard SQL is now supposed to be an integer, decimal 16705. But MySQL and MariaDB will treat it as a string, ‘AA’.

It is always legal for an implementation to have “non-conforming” syntax that causes “implementation-dependent” results. That’s what happened with 0x4141, in the mists of time. But now, if one decides to “conform” with the syntax of this optional feature, one must change the behaviour drastically: not merely a different value but a different data type.

I suppose that the road to compliance would involve deprecations, warnings, sql_mode changes, etc., lasting years.

Underscore digit separators

MySQL and MariaDB example:

CREATE TABLE t (10_000_000 INT); INSERT INTO t VALUES (1); SELECT 10_000_000;

Judgment: not compliant with SQL:2023 optional feature T662 Underscores in numeric literals

Big numbers like 10,000,000 cannot be expressed in SQL using commas because that looks like three separate values. The solution, which I see was implemented a few years ago in Python, is to express 10,000,000 as 10_000_000 — that is, underscores instead of commas.

In standard SQL, an identifier cannot begin with a digit. But in MySQL and MariaDB, it can, so 10_000_000 is a legal regular identifier. This is another example of an extension that causes trouble in a long term.

There is an idiom about this, usually about chickens: “come home to roost”. As Cambridge Dictionary puts it: “If a past action, mistake, etc. comes home to roost, it causes problems at a later date, especially when this is expected or seems deserved …”

JSON

MariaDB example:

CREATE TABLE t (s1 JSON);

Judgment: partial compliance with SQL:2023 feature T801 JSON data type etc.

I’m not going to try to analyze all the new JSON options because it’s a big subject. Also there’s a CYCLE clause and a new section of the standard, part 16 Property Graph Queries (SQL/PGQ), which I don’t think is appropriate to get into during this comparison of features in the foundation. Maybe some other time.

Score

Actually, despite pointing to some non-compliance, I have to give credit to the people who decided to implement some features long before they became standard. (And I don’t credit myself at all because they appeared before I was a MySQL architect, and in fact I objected to some of them while I was with MySQL AB.)

Well done.

ocelotgui news

The new version of the Ocelot GUI application, ocelotgui, is 2.0. As always the source and executable files are on github. The biggest new feature is charts. I’ll say more in a later blog post.

Update added 2023-06-12: Re ANY_VALUE: see comment by Øystein Grøvlen. As for the question from Federico Razzoli, I cannot give an authoritative answer. Also I’ve been reminded that MariaDB request MDEV-10426 regarding ANY_VALUE is marked as part of MariaDB 11.2 flow, but until then I guess MySQL is a bit ahead.

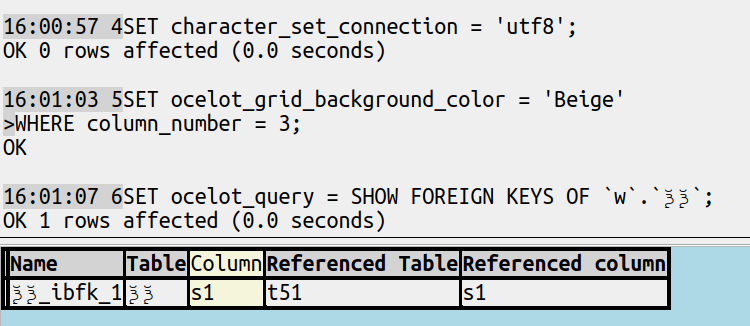

Foreign Key Displays

For foreign keys, I like to see what references what, It’s easy to find but the challenge is to decide how to display.

The cute

Showing a result with the mysql client:

prompt> call show_fk('t2');

+-------------+-----------------+------------+

| referencing | constraint_name | referenced |

+-------------+-----------------+------------+

| t2 | mx | t3 |

| s1 | | s1 |

| s2 | | s2 |

| | | |

| t2 | t2_ibfk_1 | t1 |

| s1 | | s1 |

+-------------+-----------------+------------+

6 rows in set (0.08 sec)

Query OK, 6 rows affected (0.08 sec)

That is, for table T2, I show the table name and foreign-key name and referenced-table name, then the columns of that table in the order they appear in the foreign key, then if there is another foreign key repeat after a blank line.

Here is a long but simple stored procedure that produces such a display.

DROP PROCEDURE show_fk;

CREATE PROCEDURE show_fk(ref_table_name VARCHAR(128))

BEGIN

DECLARE d_table_name VARCHAR(128);

DECLARE d_column_name VARCHAR(128);

DECLARE d_ordinal_position INT;

DECLARE d_constraint_name VARCHAR(128);

DECLARE d_referenced_table_name VARCHAR(128);

DECLARE d_referenced_column_name VARCHAR(128);

DECLARE counter INT DEFAULT 0;

DECLARE err INT DEFAULT 0;

DECLARE x CURSOR FOR

SELECT table_name, column_name, ordinal_position,

constraint_name,

referenced_table_name, referenced_column_name

FROM information_schema.key_column_usage

WHERE table_name = ref_table_name AND referenced_column_name IS NOT NULL

ORDER BY constraint_name, ordinal_position;

DECLARE CONTINUE HANDLER FOR NOT FOUND SET err = 1;

DECLARE CONTINUE HANDLER FOR SQLEXCEPTION set err = 1;

CREATE TEMPORARY TABLE fks (referencing VARCHAR(128),

constraint_name VARCHAR(128),

referenced VARCHAR(128));

OPEN x;

WHILE err = 0 DO

FETCH x INTO d_table_name,d_column_name, d_ordinal_position,

d_constraint_name,

d_referenced_table_name, d_referenced_column_name;

IF err = 0 THEN

IF counter <> 0 AND d_ordinal_position = 1 THEN

INSERT INTO fks VALUES ('', '', '');

END IF;

IF d_ordinal_position = 1 THEN

INSERT INTO fks VALUES (d_table_name, d_constraint_name, d_referenced_table_name);

END IF;

INSERT INTO fks VALUES (d_column_name, '', d_referenced_column_name);

SET counter = counter + 1;

END IF;

END WHILE;

CLOSE x;

SELECT * FROM fks;

DROP TABLE fks;

END;

CALL show_fk('t2');

This is vaguely like an entity-relationship diagram, but with tables rather than pictures.

The flaws are: (1) it needs extra privileges, (2) it mixes different object types. So let’s look at the simpler and more common type of display.

The usual

Everything necessary can come from information_schema.key_column_usage.

For example, there’s a GUI that displays with these columns:

Name | Schema | Table | Column |Referenced Schema | Referenced Table | Referenced Column

That’s easy to reproduce with

SELECT constraint_name AS `Name`, table_schema AS `Schema`, table_name AS `Table`, column_name AS `Column`, referenced_table_schema AS `Referenced Schema`, referenced_table_name AS `Referenced Table`, referenced_column_name AS `Referenced Column` FROM information_schema.key_column_usage WHERE referenced_column_name IS NOT NULL ORDER BY constraint_name, ordinal_position;

Or if that takes too long to type, make a view.

There’s another GUI that displays with these columns:

Key name | Columns | Reference Table | Foreign Columns | On UPDATE | On DELETE

(The “On UPDATE” and “On DELETE” values would have to come from information_schema.referential_constraints.)

The objection that I’d make is that such headers are not standard. So anybody who knows the actual column names has to do a double take, wondering whether the first column is the same as “constraint_name” or something exotic, and so on. Use of multiple different names for the same thing is poetry not programming.

The new

So I think this display, which admittedly makes cosmetic changes (replacing ‘_’ with ‘ ‘ and changing upper case to mixed case and emphasizing one column) is better:

I’ve made it so that can come from user statements or from the explorer. The source code is downloadable now and the released executables will come soon.

Offset and fetch clauses in MariaDB

A new feature in MariaDB 10.6 is “Implement SQL-standard SELECT … OFFSET … FETCH”.

I’ll say what’s not in the manual: mention other vendors, add details, talk style, show how to get the wrong answer, describe WITH TIES, mention alternatives, experiment with locks, and list 11 minor flaws.

Other vendors

Products with support for OFFSET … FETCH:

Oracle, DB2, SQL Server.

Products with support for LIMIT … OFFSET:

Tarantool, MySQL, Vertica, PostgreSQL.

Products with support for neither:

Firebird, ScyllaDB, Actian.

This isn’t a complete list — there are lots of SQL products nowadays — but it does include the “Big Three”. All of them support SELECT … OFFSET … FETCH.

Syntax details

The BNF actually is:

[ OFFSET start { ROW | ROWS } ]

[ FETCH { FIRST | NEXT } [ count ] { ROW | ROWS } { ONLY | WITH TIES } ]

The “start” and count” must be unsigned integers if they’re literals, but if they’re declared variables then they can be NULL (in which case they’re treated as zeros), or can be negative (in which case they’re treated as -infinity for “start” and +infinity for “count”) (but depending on undefined behaviour isn’t always fun).

The lowest offset is zero which is the first row, and the lowest count is zero, that is, it is possible to select zero rows. If count is omitted, then 1 is assumed.

Style

If you haven’t read the descriptive SQL style guide and clicked like, then you won’t understand this section, skip it.

OFFSET and FETCH are both either “clause starts” or “sub-clause starts” so if the choice is to put separate clauses on separate lines, then they are.

Deciding whether to use ROW rather than ROWS, or FIRST rather than NEXT, (neither of which make a difference for server behaviour), one could base a rule on English grammar:

(1) If there was an OFFSET clause with a non-zero number-of-rows, then NEXT, else FIRST. If number-of-rows is greater than one, or unknown, then ROWS, else ROW.

or one could base a rule on what comes first in the MariaDB manual BNF:

(2) The BNF has {FIRST|NEXT} and {ROW|ROWS} so FIRST and ROW.

or one could base a rule on what the examples in the manual use most often:

(3) FIRST and ROWS

or one could base a rule on whatever is shortest:

(4) NEXT and ROW.

I like (3), for example

OFFSET 5 ROWS FETCH FIRST 1 ROWS ONLY;

but there’s no established rule yet so I’ll vary the format in what follows.

How to get the wrong answer

Try this:

CREATE TABLE td (char_1_column CHAR(1) DEFAULT '1',

null_column INT,

char_2_column CHAR(2));

CREATE INDEX int_char_1_column_plus_char_2_column

ON td (char_1_column, char_2_column);

INSERT INTO td (char_2_column)

VALUES ('a'),('b'),('c'),('d'),('e'),('ab');

SELECT char_2_column, null_column

FROM td

WHERE char_1_column > '0'

OFFSET 0 ROWS

FETCH FIRST 4 ROWS ONLY;

SELECT char_2_column, null_column

FROM td WHERE char_1_column > '0'

OFFSET 4 ROWS

FETCH FIRST 4 ROWS ONLY;

Result of first SELECT:

(‘a’,NULL),(‘ab’,NULL),(‘b’,NULL),(‘c’,NULL)

Result of second SELECT:

(‘e’,NULL),(‘ab’,NULL)

So there’s no (‘d’,NULL) and there’s a duplicate (‘ab’,NULL). Wrong answer.

I don’t think it’s a bug because, in the absence of an ORDER BY statement, there is no guarantee which rows will be skipped in the second SELECT. But luckily it’s due to an exotic situation which is easy to avoid: the combined index on a non-unique column and a multi-character string column. Just don’t use the index, and all is well.

WITH TIES

The effects of the new syntax are mostly the same as with LIMIT … OFFSET, but WITH TIES is new.

Suppose there are 6 rows, selected in order (there must be an ORDER BY clause):

(NULL),(NULL),(1),(1),(2),(2).

Then OFFSET 0 ROWS FETCH NEXT 1 ROWS WITH TIES will gives us (NULL),(NULL).

Thus WITH TIES doesn’t mean that the two values are technically “equal”, they merely must be peers, so NULLS tie. The interesting thing is that when you ask for “NEXT 1 ROW” you get the next 2 rows. But that only applies to FETCH, it doesn’t apply to OFFSET, therefore this could be a mistake:

SELECT * FROM t ORDER BY pk OFFSET 0 ROWS FETCH NEXT 1 ROWS WITH TIES; SELECT * FROM t ORDER BY pk OFFSET 1 ROWS FETCH NEXT 1 ROWS WITH TIES;

With the example 6 rows, the second SELECT will return a row that was already returned for the first SELECT. It would be better to say, instead of “OFFSET 1 ROWS”, “OFFSET (number of rows returned by the previous SELECT) ROWS”.

Alternatives

A standard way to fetch only one row:

BEGIN NOT ATOMIC DECLARE v INT; DECLARE c CURSOR FOR SELECT * FROM t; OPEN c; FETCH NEXT FROM c INTO v; END;

A non-standard way to fetch only one row: (remember to restore @@sql_select_limit later):

SET sql_select_limit = 1; SELECT * FROM t;

A way to offset by 10 and fetch 10 (this assumes that pk has all the integers in this range i.e. it depends on user knowing about contents and indexes, and assumes that user only wants to go forward without skipping)

SELECT * FROM t WHERE pk > 10 AND pk <= 10 + 10;

A way to let the user do the offsets and fetches (this depends on the client being a GUI and the user knowing how to click on a scroll bar)

SELECT * FROM t;

… although users often only look at the first few pages (think of how often you use Google and only click Next a few dozen times).

Locking

Will locking, or SET TRANSACTION ISOLATION LEVEL, guarantee consistency?

For a test, I started two clients. On the first, I said:

CREATE TABLE pks (pk INT PRIMARY KEY);

BEGIN NOT ATOMIC

DECLARE v INT DEFAULT 0;

WHILE v <= 10000 DO

INSERT INTO pks VALUES (v);

SET v = v + 1;

END WHILE;

END;

SET @@autocommit=0;

SET TRANSACTION ISOLATION LEVEL SERIALIZABLE;

START TRANSACTION;

SELECT * FROM pks

WHERE pk > 400

OFFSET 10 ROWS

FETCH FIRST 2 ROWS ONLY FOR UPDATE;

On the second, I said:

SET @@autocommit=0; SET TRANSACTION ISOLATION LEVEL SERIALIZABLE; START TRANSACTION; DELETE FROM pks WHERE pk = 7227; DELETE FROM pks WHERE pk = 405; DELETE FROM pks WHERE pk = 411;

Result: The first DELETE succeeds, the second DELETE hangs until I say ROLLBACK; on the first client. The third DELETE would also have hung if I had said it first.

In other words: the rows between 401 and 410 (the rows skipped due to OFFSET) are locked, and the rows between 411 and 412 (the rows picked up by FETCH) are locked, but the rows after 412 (the rest of the rows matching WHERE) are not locked.

What this means, of course, is that ordinarily you can’t be certain that you’re paging through a consistent set. If this is a concern (it shouldn’t always be), make a copy on either the server or the client.

Minor Flaws

A few incompatibilities with standard SQL or another DBMS or another bit of MariaDB 10.10 syntax.

Table t has 4 rows created thus:

CREATE TABLE t (s1 INT, s2 INT); INSERT INTO t VALUES (1,2),(2,1),(3,2),(4,1);

1.

WITH x AS (SELECT * FROM t OFFSET 5 ROWS) SELECT * FROM x;

Result: Success, all 4 rows in table t are returned. It appears that the OFFSET clause is ignored.

2.

BEGIN NOT ATOMIC DECLARE var1 DECIMAL(0); SELECT * FROM t OFFSET var1 ROWS; END;

Result: Error 1691 (HY000) A variable of a non-integer based type in LIMIT clause

Never mind whether var1 should be accepted. The error message is obsolete, I was not using a LIMIT clause.

3.

BEGIN NOT ATOMIC DECLARE var1 BIT DEFAULT NULL; SELECT * FROM t OFFSET var1 ROWS; END;

Result: Success, all rows are returned.

Sometimes non-integer variables are illegal, but here a BIT variable is legal.

MariaDB calls BIT a numeric data type but SELECT * FROM t OFFSET b’1′ ROWS wouldn’t work.

4.

SET sql_mode='oracle';

CREATE PROCEDURE p2 AS

var1 NUMBER := 1;

BEGIN

SELECT * FROM t OFFSET var1 rows;

END;

(Don’t forget to restore sql_mode later.)

Result: Error 1691 (HY000) A variable of a non-integer based type in LIMIT clause

That’s right for standard SQL (“The declared type … shall be an exact numeric with scale 0”) but Oracle says it might be more forgiving (“If rowcount includes a fraction, then the fractional portion is truncated.”)

5.

SELECT * FROM t OFFSET 1 ROWS FETCH FIRST 0 ROWS ONLY;

Result: Success, zero rows are returned.

That’s wrong for standard SQL, which would return “data exception — invalid row count in fetch first clause”.

6.

SELECT * FROM t FETCH FIRST 1 ROWS ONLY;

Result: Success.

But the manual’s BNF is

OFFSET start { ROW | ROWS }

FETCH { FIRST | NEXT } [ count ] { ROW | ROWS } { ONLY | WITH TIES }

which might suggest to newbies that the OFFSET clause is compulsory before FETCH.

7.

SET @x = 1; SELECT * FROM t OFFSET @x ROWS;

Result: Syntax error.

I can find, by saying CREATE TABLE .. AS SELECT @x; and then saying SHOW CREATE TABLE or SELECT … FROM INFORMATION_SCHEMA, that @x is BIGINT. BIGINT declared variables are legal, and I can use variables that start with @ in some other situations. This is how things go when variables have variable types.

8.

SHOW WARNINGS OFFSET 1 ROW;

Result: Success. I believe this is reasonable, and of course any non-standard syntax can be handled in an implementor-defined way. But it’s not documented on the documentation page for SHOW WARNINGS.

9.

BEGIN NOT ATOMIC DECLARE only INT DEFAULT 2e2; DECLARE row INT DEFAULT (SELECT 5); SELECT * FROM t OFFSET ROW ROW FETCH FIRST ONLY ROW ONLY; END;

Result: Success.

In standard SQL:2016 ROW and ONLY are reserved words.

MariaDB tends to accept goofiness since it’s not your nanny, but I believe in egregious cases the standard strictness is preferable after reasonable notice.

10.

SELECT * FROM t FETCH FIRST 1 ROWS ONLY ROWS EXAMINED 1;

Result: Error.

Another inconsistency, since LIMIT 1 ROWS EXAMINED 1 is legal.

11.

VALUES (1),(2),(3) OFFSET 1 ROW FETCH FIRST ROW ONLY;

Result: Success.

This is fine, but once again undocumented.

ocelotgui support

The Ocelot GUI client recognizes MariaDB syntax when connected to a MariaDB server, so if you need features like autocomplete after OFFSET to be correct then download it from github.

Duplicate column names

A former colleague from my MySQL AB days asked me aboutSELECT pk, SUM(amount) AS pk FROM t ORDER BY pk;

should the duplicate use of the name PK be illegal?

My reply is: A relational-database expert deplores it in the select list; the SQL standard says it’s conceptually illegal in the order-by clause; MySQL and MariaDB handle it in an odd way; the Ocelot GUI for MySQL and MariaDB doesn’t flag it.

A relational-database expert deplores

CJ.Date, in Date On Databases, Writings 2000-2006, has a multi-page section about “Duplicate Column Names”. I’ll just quote the firmest negatives.

… we can avoid the duplicate names if we want to. The problem is, we don’t have to, and SQL does not have to deal with the possibility that a given table might have column names that aren’t unique. As an aside, I should explain that … we don’t have to use the “AS column name” construct is because it wasn’t part of the original standard (it was introduced with SQL:1992), and compatibility therefore dictates that its use has to be optional. (I should also note in passing that — believe it or not — names introduced by AS don’t have to be unique!

*Every SQL table has a left-to-right column ordering *Every column in every named SQL table (i.e. base table or view) has a user-known name, and that name is unique within the table in question. But neither of these properties holds, in general, for unnamed tables (i.e. intermediate and final result tables). … I’d like to point out that duplicate and missing column names both constitute a fairly egregious violation of The Naming Principle

So nothing’s ambiguous about Mr Date’s evaluation, but he is acknowledging that SQL won’t be changing due to compatibility with old standards, and at least it wouldn’t allow duplicate column names in the select list of a CREATE VIEW statement.

The SQL standard says it might be illegal

The standard says this about Concepts:

This Clause describes concepts that are, for the most part, specified precisely in other parts of ISO/IEC 9075. In any case of discrepancy, the specification in the other part is to be presumed correct.

So we usually expect a specific syntax rule, but if it’s missing, we maybe can look at Concepts.

And this is SQL:2016, Foundation, 4.13 Columns, fields, and attributes, in the Concepts section:

Sometimes the Syntax Rules forbid two elements at different ordinal positions from having equivalent names (particularly when created through an SQL schema statement). When two elements with equivalent names are permitted, then the element name is ambiguous and attempts to reference either element by name will raise a syntax error.

That suggests that if pk appears twice in select list then ORDER BY pk; should fail, but it’s not explicitly stated in the Syntax Rules. This in contrast with GROUP BY, which has a clear Syntax Rule, that is, “Each grouping column reference shall unambiguously reference a column of the table resulting from the from clause.” Perhaps when I was on the ANSI committee I should have asked why Syntax Rule for ORDER BY doesn’t also say “unambiguously”, but it’s too late now.

MySQL and MariaDB handle it in an odd way

I tried variations of the syntax with MySQL 8.0.31 and MariaDB 10.10.1, sql_mode=only_full_group_by is not true, sql_mode=ansi_quotes is true. They both return the same results.

CREATE TABLE t (pk INT, amount INT); INSERT INTO t VALUES (1,2), (2,1),(1,2),(2,1); SELECT pk, (amount) AS pk FROM t ORDER BY pk;

Result: Error 1052 (23000) Column ‘pk’ in order clause is ambiguous

SQLSTATE values starting with 23 are for “integrity constraint violation”, so it would be better to have something starting with 42 (syntax error or access violation), I described good old ’42’ in an earlier blog post. However, the error message is good and consistent with standard SQL.

SELECT pk, SUM(amount) AS pk FROM t ORDER BY pk;

Result: 1,6.SELECT pk, 5 AS pk, amount FROM t ORDER BY pk,amount DESC;

Result: (1,5,2),(1,5,2),(2,5,1),(2,5,1)SELECT pk, (SELECT SUM(amount) AS pk FROM t) AS "pk" FROM t ORDER BY pk;

Result: (1,6),(2,6),(1,6),(2,6)

These results are harmless — there’s either an implied grouping or a literal for the main sort, so they’re always the same value, so they can be optimized away. Nevertheless, they’re inconsistent — if “Column ‘pk’ in order clause is ambiguous” is detectable, and it’s a syntax error not a runtime error, it shouldn’t have been ignored.

SELECT pk * - 1 AS pk, pk FROM t ORDER by pk;

Result: (-2,2),(-2,2),(-1,1),(-1,1)SELECT pk, pk * - 1 AS pk FROM t ORDER by pk;

Result: (2,-2),(2,-2),(1,-1),(1,-1)

These results are odd, they show that we’re ordering by the pk that has an AS clause, regardless of which column is first in the select list.

SELECT SUM(amount) AS pk, pk FROM t GROUP BY pk ORDER BY pk;

Result: (2,2),(4,1)

Also there is a warning: Column ‘pk’ in group statement is ambiguous. But it does succeed, and once again the item with the AS clause is preferred. This syntax is explicitly declared illegal in standard SQL.

SELECT * FROM (SELECT 1 AS a, 1 AS a) AS t1, (SELECT 1 AS a, 1 AS a) AS t2;

Result: Error 1060 (42S21) Duplicate column name ‘a’

This is the right result, and the SQLSTATE is better because it starts with ’42’.

The reason it’s correct is that Trudy Pelzer long ago reported a bug with duplicate names in subqueries, which are illegal due to a different item in the standard, and Oleksandr Byelkin fixed it.

The Ocelot GUI for MySQL and MariaDB doesn’t flag it

An obvious thought is: since ocelotgui recognizes MySQL/MariaDB syntax, and gives hints or autocompletions while the user is entering a statement, should I be adding code to catch this? After all, we stop most illegal syntax before it goes to the server.

But I’m wary. The behaviour that I describe here is undocumented, so it could change without warning, and besides who are we to reject what the server would accept? It won’t be at the top of the “To Do” pile.

Speaking of “To Do” piles, ocelotgui 1.8 has now been released, and is downloadable from github.

Data type conversion and SQLSTATE

A while ago we got a question about data type conversion and SQLSTATE …

“

Suppose we have data types T1 and T2 (either built-in or user defined).

- This pair is not explicitly listed in the Store Assignment rules.

- There is no a CREATE CAST for this pair defined.

Reading the standard, I have an impression that these statements should fail:

UPDATE t1 SET T1_col=T2_col;

INSERT INTO t1 (T1_col) SELECT T2_col FROM t1;

SET T1_var=T2_var; — inside an SP

But I could not find which exactly error should be raised:

- what should be SQLSTATE

- what should be error message text

A related question:

Is it possible to do CREATE CAST for a pair of two built-in data types?

“

The answer was …

I will be quoting 9075-1:2011 Framework and 9075-2:2016 Foundation.

Framework says:

“In the Syntax Rules, the term shall defines conditions that are required to be true of syntactically conforming SQL language. When such conditions depend on the contents of one or more schemas, they are required to be true just before the actions specified by the General Rules are performed. The treatment of language that does not conform to the SQL Formats and Syntax Rules is implementation-dependent. If any condition required by Syntax Rules is not satisfied when the evaluation of Access or General Rules is attempted and the implementation is neither processing non-conforming SQL language nor processing conforming SQL language in a non-conforming manner, then an exception condition is raised: syntax error or access rule violation.”

… Therefore: if you see the word “shall” in a syntax rule, and the condition is not true, it is a syntax error or access violation.

Foundation says: re set clause list:

“Otherwise, the Syntax Rules of Subclause 9.2, “Store assignment”, are applied with the column of T identified by the <object column> as TARGET and the <update source> of the <set clause> as VALUE.”

Foundation says: re store assignment: syntax rules: where SD = source data type and TD = target data type:

“If TD is character string, binary string, numeric, boolean, datetime, interval, or a user-defined type, then either SD shall be assignable to TD or there shall exist an appropriate user-defined cast function UDCF from SD to TD.”

… Therefore: since the word “shall” has been used and this is a syntax rule: if the condition is not true, it is a syntax error or access violation.

Foundation says: re definition of assignable:

“A binary string is assignable only to sites of binary string type.”

“A number is assignable only to sites of numeric type.”

… And so on. That is, for each data type, there’s a note about what assignable means for that type.

Now take this as an example:

… SET binary_string_target = numeric_source …

According to “A binary string is assignable only to sites of binary string type”, this SD is not assignable to this TD.

Therefore, unless there is an appropriate user-defined cast function, the partial condition “SD shall be assignable to TD” has not been met.

For a moment ignore the other partial condition, “there shall exist an appropriate user-defined cast function”. In that case, now we know that the rule for store assignment, which is required by the rule for set clause list, has not been met. And that rule is a syntax rule that includes the word “shall”.

So it is a syntax error or access rule violation.

Table 38 “SQLSTATE class and subclass codes” says that the class for syntax error or access rule violation is 42.

Thus it’s clear — at last, eh? — that the SQLSTATE class is 42. What about the subclass?

Foundation says:

“NOTE 767 — One consequence of this is that an SQL-implementation may, but is not required by ISO/IEC 9075 to, provide subclasses for exception condition syntax error or access rule violation that distinguish between the syntax error and access rule violation cases.”

In other words, you can say SQLSTATE is 42000 but you can say other things too.

Here I recommend that you look at what DB2 for z/OS has.

“42806 A value cannot be assigned to a variable, because the data types are not compatible.” or

“42821 A data type for an assignment to a column or variable is not compatible with the data type.”

Conclusion: the SQLSTATE can be 42xxx, e.g. 42821, syntax error or access violation.

But there are two loopholes! Long ago, when my job was to say how MySQL was compatible with standard SQL, I used them both.

Loophole #1: See above: “The treatment of language that does not conform to the SQL Formats and Syntax Rules is implementation-dependent.”

Well, whenever MySQL/MariaDB has a type that is not mentioned in the official rules, such as TEXT rather than CHARACTER VARYING, that means you can declare there is no error.

Loophole #2: See above: “or there shall exist an appropriate user-defined cast function”.

Well, there is a cast function whenever MySQL/MariaDB says there is. That is, if Monty declares that you can assign a number to a varchar, while smiling, I would explain: the meaning of the word “user” is implementation-dependent. In this case, the user who created the function is Monty. That means you can declare there is no error.

But, if you declare that you are using the loopholes, then CREATE CAST will be illegal because Foundation requires: “There shall be no user-defined cast for SDT and TDT.” and, alas, where user = Monty, there is a user-defined cast.

Thanks to Alexander Barkov for the question.

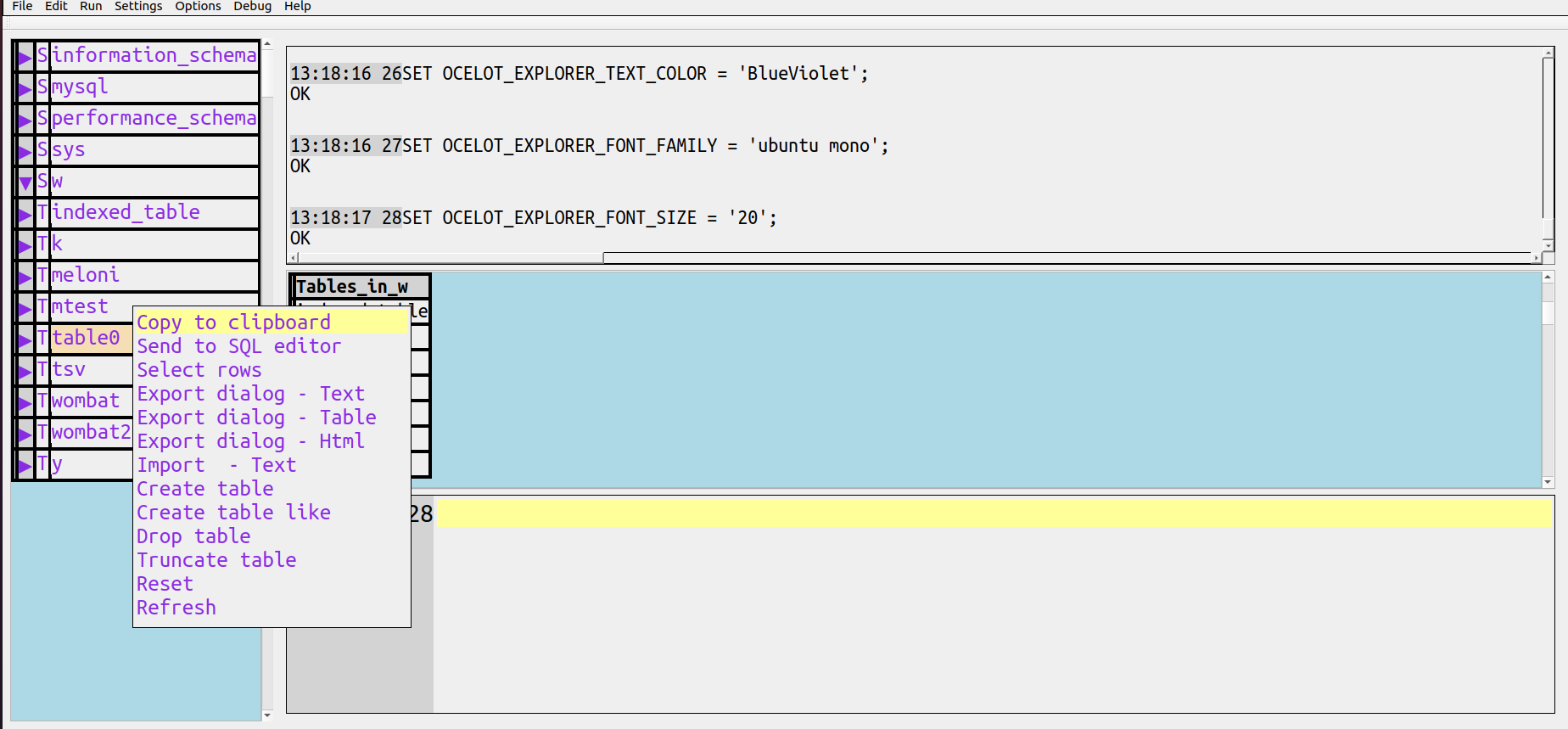

ocelotgui explorer

On an unrelated note, the Ocelot Graphical User Interface has an explorer.

Sometimes called a navigator, and vaguely like what people are used to with Windows Explorer, this is a widget that appears (usually on the left but detachable) showing objects in all schemas that the user can access. If the user right-clicks on an object, an in-context menu appears with SQL statements or client actions that can be performed by clicking. Customizable.

Currently it’s only in source code which you can download from github, as always.

It and its documentation will be in the next release and/or in the next post of this blog.

The SQL substring function

I’ll cover SUBSTRING’s variants, its behaviour in odd cases, and what can go wrong.

I’ll add a C program that emulates what the SQL:2016 standard requires.

Ordinary case

SUBSTRING(value-expression FROM start-position [FOR string-length])

I expect that everyone knows the ordinary case: value-expression should be either a character string or a binary string, start-position and string-length should be integers, and SUBSTRING(‘abc’ FROM 2 FOR 2) will return ‘bc’.

Variation: SUBSTR and/or commas

SUBSTR(value-expression, start-position [, string-length])

Abbreviating SUBSTRING to SUBSTR, with commas instead of words to separate arguments, is Oracle and SQLite syntax. MySQL cheerily accepts both syntaxes and treats them as synonyms.

Informix accepts both but does not treat them as synonyms. DB2 has something similar.

Tarantool has SUBSTR() but will soon change to SUBSTRING().

So when you have a choice, you just have to decide: do you want to be compatible with Oracle, or with almost everybody else?

Variation: passing a non-string and non-integers

In MySQL/MariaDB I can say SUBSTRING(123, 1.5, 1.1) and get ‘2’. The standard requirement is: the first argument must be a string and the others must be exact with scale 0. So rather than accepting decimals and rounding, others will call this an error.

What we’re seeing here is the typical MySQL/MariaDB idea that, if it’s possible to do something with the input, do it. And in this case it’s possible by converting the first argument to a string and rounding the next arguments.

But it’s not a strictly MySQL thing, Oracle and DB2 will also accept non-integers and do implicit conversions.

Variation: negative start-position

This is probably the most frequent variation.

What is SUBSTRING(‘abc’, -2, 2)?

The standard is 100% clear (well, as clear as it ever gets): start-position is 2 characters before the start of the string, and string start is 1, so

SUBSTRING(‘abc’ FROM -2 FOR 2) is a zero-length string but

SUBSTRING(‘abc’ FROM -2 FOR 4) is ‘a’.

SQL Server follows this though I don’t know whether it always does so.

However — perhaps because programmers are used to seeing such stuff in php and perl and Lua — the alternative is to say that negative start-positions are counted backwards from the end of the string, so -1 is the last character, -2 is the second-last, and so on.

So in MySQL SUBSTRING(‘abc’ FROM -2 FOR 2) is ‘bc’ and

SUBSTRING(‘abc’ FROM -2 FOR 4) is also ‘bc’.

But don’t hope for consistency: SUBSTRING(‘abc’ FROM -4 FOR 5) is just a zero-length string.

I’ll admit that the count-backwards variation is popular, since it’s in Oracle and SQLite and MySQL/MariaDB (and in Informix SUBSTR, which is the difference I mentioned earlier). But it’s awfully easy to avoid: use a different function if your DBMS supports RIGHT() or INSTR(), or say

SUBSTRING(‘abc’ FROM LENGTH(‘abc’) – 2 FOR 2).

For that last suggestion, I wondered: won’t that take longer? So I filled a table with long strings.

CREATE TABLE j (s1 TEXT(1000000));

CREATE PROCEDURE p()

BEGIN

DECLARE i INTEGER DEFAULT 0;

WHILE i < 737 DO

INSERT INTO j VALUES (REPEAT('abc',1000000));

SET i = i + 1;

END WHILE;

END;

CALL p();

SELECT SUBSTRING(s1 FROM -2 FOR 2) FROM j;

SELECT SUBSTRING(s1 FROM LENGTH(s1)-2 FOR 2) FROM j;

The SELECT with “FROM -2” takes on average about 12 seconds on my laptop.

The SELECT with “FROM LENGTH(s1)-2” takes on average about 7 seconds.

Your mileage will vary but if you get anything vaguely similar you’ll have to conclude that non-standard negative start positions are unnecessary.

Variation: going past the end

Of course SUBSTRING(‘abc’ FROM 3 FOR 5) is ‘c’ and the nonexistent characters past the end don’t matter. That’s standard, and it’s why I found it inconsistent when I saw different behaviour for nonexistent characters before the start.

But there’s another possible answer: the DB2 incomprehensible result. The documentation for SUBSTR() says that the result is an error, and simultaneously that the string is padded on the right with spaces or zero bytes.

Variation: CHAR in, VARCHAR out

If the input is CHAR, is the result supposed to be CHAR?

The standard is once again clear about this:

“If the declared type of <character value expression> is fixed-length character string or variable-length character string, then DTCSF is a variable-length character string type with maximum length equal to the fixed length or maximum length of DTCVE.”

For Oracle the result is VARCHAR if the input is CHAR.

For SQL Server the result is VARCHAR if the input is CHAR.

For DB2 the result is VARCHAR if the input is CHAR.

For MySQL the result is undocumented so I used my usual way of finding out what the data type of a result is …

CREATE TABLE j (s1 CHAR(5)); CREATE TABLE j2 AS SELECT SUBSTRING(s1 FROM 2 FOR 2) FROM j; SELECT table_name, data_type FROM information_Schema.columns WHERE column_name = 's1';

Result: ‘char’. Yes, I know, SHOW CREATE TABLE j2; would say ‘varchar’, but I don’t rely on it here. If you set sql_mode=’pad_char_to_full_length’; and then select length(column_name) from all rows in the table, you’ll see it’s always the defined length.

Variation: octets

SUBSTRING(… FROM … FOR … [USING CHARACTERS|OCTETS])

By now you’ve gathered that my main concern is SUBSTRING for characters more than SUBSTRING for binaries, but the standard says I can specify the position and length in bytes rather than characters.

So, if you’ve got a multi-byte character, and you use OCTETS, you can split it so that the result is not valid characters. For example: starting with ‘Д’ in UTF-8 (which is encoded as 0D94):

SUBSTRING(‘Д’ FROM 2 FOR 1 USING OCTETS)

is X’94’ which is not a UTF-8 character that was in the input.

DB2 covers this up by replacing invalid character fragments with spaces.

For the standard, for the newer substring function added in SQL:2016, I read:

“If [character length units] is OCTETS and the [relevant] octet of STR is not the first octet of a character, then the result of [this function] is implementation-dependent.”

That certainly seems like the appropriate thing to say about all substring functions.

Variation: added in SQL:2016

These SUBSTRING syntaxes which are part of SQL:2016 might someday be interesting:

<regular expression substring function> ::=

SUBSTRING <left paren> <character value expression> SIMILAR <character value expression>

ESCAPE <escape character> <right paren>

<regex substring function> ::=

SUBSTRING_REGEX <left paren>

<XQuery pattern> [ FLAG <XQuery option flag> ]

IN <regex subject string>

[ FROM <start position> ]

[ USING <char length units> ]

[ OCCURRENCE <regex occurrence> ]

[ GROUP <regex capture group> ]

<right paren>

… but as of today they seem to have not caught fire in popular imagination.

Variation: zero-length strings can be errors

The obsolete manual for Oracle 10g says “When you specify a value that is less than 1, the function returns NA.” which I guess means it was an error long ago. Now the return is null because Oracle doesn’t distinguish it from a zero-length string.

In a draft of an earlier SQL standard, one might read:

“16) If the result of <string value expression> is a zero-length character string, then it is implementation-defined whether an exception condition is raised: data exception — zero-length character string”

But that, in the section about <string value function>, didn’t fit well. It was replaced by

“16) If the result of <string value function> is the zero-length character string or the zero-length binary string, then it is implementation-defined whether an exception condition is raised: data exception – zero-length character string or data exception – zero-length binary string, respectively.”

So: if the result of SUBSTRING() is ”, then it’s not a violation of the standard to return an error. However, I don’t expect that anybody would say that unless they did the same for everything, not just SUBSTRING().

C program that does what the standard says

I said the standard document is clear, but it is also intimidating. The main clause about SUBSTRING for characters looks like this:

3)If <character substring function> is specified, then:

a) If the character encoding form of <character value expression> is UTF8, UTF16, or UTF32, then, in

the remainder of this General Rule, the term "character" shall be taken to mean "unit specified by

<char length units>".

b) Let C be the value of the <character value expression>, let LC be the length in characters of C, and

let S be the value of the <start position>.

c) If <string length> is specified, then let L be the value of <string length> and let E be S+L. Otherwise,

let E be the larger of LC + 1 and S.

d) If at least one of C, S, and L is the null value, then the result of the <character substring function> is

the null value.

e) If E is less than S, then an exception condition is raised: data exception - substring error.

f) Case:

i) If S is greater than LC or if E is less than 1 (one), then the result of the <character substring

function> is the zero-length character string.

ii) Otherwise,

1) Let S1 be the larger of S and 1 (one). Let E1 be the smaller of E and LC+1. Let L1 be

E1-S1.

2) The result of the <character substring function> is a character string containing the L1

characters of C starting at character number S1 in the same order that the characters appear

in C.

It’s not something that humans can grasp immediately. But notice that, in English, it’s declaring variables and conditional-execution statements. So for testing purposes it’s straightforward to convert the English into C. That’s what I’ve done for this section. Here is a stand-alone program that replicates all the important parts of the clause so that anyone can see what SUBSTRING() would produce for any combination of

SUBSTRING(value-expression FROM start-position [FOR string-length])

/*

Simulate ISO 9075-2:2016 description of substring

By Peter Gulutzan 2021-08-30

Copyright (c) 2021 by Peter Gulutzan. All rights reserved.

To compile: gcc -o substring substring.c

To run, with 2 or 3 args: substring character_value_expression start_position [string_length]

Assumption: start_position and string_length are integers, there is no validity check.

Convention: if character_value_expression is - then we treat it as an empty string.

Example:

pgulutzan@pgulutzan-VirtualBox:~/tarantool_sandbox$ ./substring 'abc' -1 1

character_value_expression: abc.

start_position: -1

string_length: 1

LC: 3

S: -1

L: 1

E: 0

S > LC or E < 1. Return zero-length string

*/

#include <stdlib.h>

#include <stdio.h>

#include <string.h>

#include <stdbool.h>

int main( int argc, char *argv[] )

{

if ((argc < 3) || (argc > 4))

{

printf("We want: character_value_expression start_position string_length\n");

exit(0);

}

bool is_length_specified;

char *character_value_expression= argv[1];

int start_position= atoi(argv[2]); /* no validity check */

int string_length;

if (argc == 3)

{

is_length_specified= false;

}

else

{

is_length_specified= true;

string_length= atoi(argv[3]); /* no validity check */

}

printf("character_value_expression: %s.\n", character_value_expression);

printf("start_position: %d\n", start_position);

if (is_length_specified == false)

{

printf("string_length: not specified\n");

}

else

{

printf("string_length: %d\n", string_length);

}

char *C= character_value_expression;

int LC= -99, S= -99, E= -99, L= -99, S1= -99, E1= -99, L1= -99;

if (strcmp(character_value_expression, "-") == 0) LC= 0;

else LC= strlen(C);

printf("LC: %d\n", LC);

S= start_position;

/* c) If <string length> is specified, then let L be the value of <string length> and let E be S+L. Otherwise,

let E be the larger of LC + 1 and S. */

if (is_length_specified == true)

{

L = string_length;

E= S + L;

}

else

{

if ((LC + 1) > S) E= LC + 1;

else E= S;

}

printf("S: %d\n", S);

printf("L: %d\n", L);

printf("E: %d\n", E);

/* e) If E is less than S, then an exception condition is raised: data exception -- substring error. */

if (E < S)

{

printf("E < S. data exception -- substring error.\n");

exit(0);

}

/* f) i) If S is greater than LC or if E is less than 1 (one), then the result of the <character substring

function> is the zero-length character string. */

if ((S > LC) || (E < 1))

{

printf("S > LC or E < 1. Return zero-length string\n");

exit(0);

}

/* f) ii) 1) Let S1 be the larger of S and 1 (one). Let E1 be the smaller of E and LC+1. Let L1 be

E1-S1. */

if (S > 1) S1= S;

else S1= 1;

if (E < (LC + 1)) E1= E;

else E1= LC + 1;

L1= E1 - S1;

printf("S1: %d\n", S1);

printf("E1: %d\n", E1);

printf("L1: %d\n", L1);

/* f) ii) 2 The result of the <character substring function> is a character string containing the L1

characters of C starting at character number S1 in the same order that the characters appear

in C. */

for (int i= S1 - 1; i < (S1 - 1) + L1; ++i)

{

printf("%c\n", *(C + i));

}

}

ocelotgui progress

The open-source GUI client program is getting constantly better. The next big feature will be “Export” to a variety of formats including delimited, boxed, html, and default. The preliminary code has been uploaded to our github repository.

The README for the current version (1.5) is here for MySQL/MariaDB.

Or, here for Tarantool.

The SQL MOD Function

What should be the result of -11 % 4, or MOD(11, -4)?

This has been controversial and you will get different results in different languages. However, in SQL there is only one correct answer, the one that the SQL standard requires. Good news: your favourite SQL DBMS gives the correct answer. But what it is, and what the proof is, will need lots of explaining.

Modulus moduli modulo

Oxford English Dictionary entry for modulus: “… 2b A whole number used as a divisor in a system of arithmetic (modular arithmetic) in which integers having the same remainder when divided by this number are regarded as equivalent. Cf. congruent adj. 5, modulo prep., residue n. 3b.”

Oxford English Dictionary entry for modulo: “Origin: A borrowing from Latin. Etymons: Latin modulō, modulus. Etymology: < classical Latin modulō, ablative of modulus modulus n. Compare earlier mod prep. The preposition was first used in this sense in a Latin context by Gauss in 1801 (see note s.v. mod prep.). … a. With respect to a modulus of (a specified value). See modulus n. 2b.”

Carl Friedrich Gauss wrote Disquisitiones Arithmeticae in Latin. In Latin, moduli is the plural of modulus and modulo is the ablative. In this context the ablative modulo indicates “by means of the modulus”.

And so R = N MOD M means get a result R by applying to a number N “by means of the modulus M“. In SQL this is the standard function

MOD(N, M)

or the common operator

N % M.

I highlight the fact that the modulus here is M — sometimes I’ve seen hints that people think the modulus is the result of the function, but that’s not the original meaning. There are good explanations of “modular arithmetic” on the Khan Academy site and in Encyclopedia Britannica which use the term in the original way, and use the common illustration of a circular clock that has 12 hours marked on it:

If it is 7 o’clock, and you add 7 hours, then it is 2 o’clock. It doesn’t mean 7+7=2, it means 7+7 and 2 are “congruent” by means of modulus 12. So are (7+7+12) and 2, (7+7+12*2) and 2, and so on. The mod function should answer: what’s the congruent number that fits on the clock?

Anybody can see that, but real-world examples of a “negative clock” are harder to find. I can think of a clock that counts down before a rocket launch, but it doesn’t go in a cycle.Nevertheless, if it did, then the hours are not from 0 to 11, they are from 0 to -11. Now, if you add 7 hours to “minus 7 o’clock” or if you subtract 7 hours from “minus 7 o’clock”, arguably you should get a negative number, as no positive number fits on a negative clock.

On the other hand, people want to use this function to get remainders and remainders are normally positive numbers regardless what you use as a divisor. So what’s desirable?

The three main possibilities

Daan Leijen wrote “Division and Modulus for Computer Scientists” following some work by Knuth and Wirth and others. It is not in Latin. But it does have some mathematical symbols, which I’ll translate …

Assume divisor <> 0.

(1) the quotient is a member of the set of integers.

(2) Dividend = divisor times quotient + remainder.

(3) ABS(remainder) < ABS(divisor).

Those are the universal rules. The additional rules can be:

(a) “Truncate”, i.e. trunc(dividend / divisor) – (dividend * result)

(b) “Floor”, i.e. it’s the remainder of round(quotient) towards infinity)

(c) “Euclidean”, i.e. what Mr Leijen regards as correct, see his lengthy explanation, but he admits it’s rarely used.

The key illustration is Mr Leijen’s “Comparison of T-, F- and E-division”

D,d qT,rT qF,rF qE,rE --- ----- ----- ----- (+8,+3) (+2,+2) (+2,+2) (+2,+2) (+8,−3) (−2,+2) (−3,−1) (−2,+2) (−8,+3) (−2,−2) (−3,+1) (−3,+1) (−8,−3) (+2,−2) (+2,−2) (+3,+1)

where T is Truncate, F is Floor, E is Euclidean, D is Dividend, d is divisor, q is quotient, r is remainder,

The exciting part is that when the Dividend is negative (-8,+3 or -8,-3), or when the divisor is negative (+8,-3 or -8,-3) the column with “rT”, i.e. remainder with Truncate, is not the same as the column with “rF”, i.e. remainder with Floor.

Modern C goes with (a) Truncate, which tends to be well supported by the processor. If you write and run this program:

#include <stdio.h>

void main()

{

printf("%d %d %d %d\n", ( 11)%( 4), ( 11)%(-4), (-11)%( 4), (-11)%(-4));

}

You will see: 3 3 -3 -3. Ditto Java and many other languages. But Lua uses (b) Floor and there is an argument for it in “A small practical example where Lua’s % behavior is better than C’s”. Also Python uses (b) Floor; Guido van Rossum explains in “Why Python’s Integer Division Floors”.

Lisp has separate mod and rem functions. Maybe that was for the best. But it’s too late for us.

What SQL vendors do

A copy-and-paste from the ocelotgui client’s History widget:

mysql>SELECT ( 11)%( 4) , ( 11)%(-4) , (-11)%( 4) , (-11)%(-4); OK 1 rows affected (0.5 seconds) +------------+------------+------------+------------+ | ( 11)%( 4) | ( 11)%(-4) | (-11)%( 4) | (-11)%(-4) | +------------+------------+------------+------------+ | 3 | 3 | -3 | -3 | +------------+------------+------------+------------+

Same in MariaDB. Same in Tarantool. Same in PostgreSQL. Same in Oracle … but Oracle warns: this is not the “classical” modulus operation. Then Oracle illustrates what they call “classical” and, it’s obvious from that illustration, they mean Floor. IBM uses the same example.

Therefore the overwhelming opinion among SQL vendors is (a) Truncate.

What the standard says

There is no % operator. There is a MOD() function.

"

<modulus expression> ::=

MOD <left paren>

<numeric value expression dividend>

<comma>

<numeric value expression divisor>

<right paren>

...

If <modulus expression> is specified,

then the declared type of each

<numeric value expression>

shall be exact numeric with scale 0 (zero).

The declared type of the result is the

declared type of the immediately contained

<numeric value expression divisor>.

...

9)If <modulus expression> is specified,

then let N be the value of the immediately

contained <numeric value expression

dividend> and let M be the value of the

immediately contained <numeric value

expression divisor>.

Case:

a)If at least one of N and M is the null

value, then the result is the null value.

b)If M is zero,

then an exception condition is raised:

data exception—division by zero.

c)Otherwise, the result is the unique exact

numeric value R with scale 0 (zero) such

that all of the following are true:

i)R has the same sign as N.

ii)The absolute value of R is less than

the absolute value of M.

iii)N = M * K + R for some exact numeric

value K with scale 0 (zero).

“

Look again at Mr Leijen’s universal rules, above. You’ll notice that the numeric-with-scale-0 requirement is equivalent to “(1) the quotient is a member of the set of integers.”, and you’ll notice that clause b) is equivalent to “Assume divisor <> 0”, and you’ll notice that clause c)iii) is equivalent to “(2) Dividend = divisor times quotient + remainder”, and you’ll notice that clause c)ii) is equivalent to “(3) ABS(remainder) < ABS(divisor).” So, except for clause c)i), all the rules are the rules that he described.

So what is -11 % 4?

It is the same as MOD(-11, 4).

Therefore -11 is the dividend and 4 is the divisor.

Therefore -11 is N and 4 is M.

a) does not apply because neither M nor N is NULL.

b) does not apply because M is not zero.

c) means for the expression N = M * K + R, we must solve for K and R.

Plugging in the values of N and M, we have

-11 = 4 * K + R.

Since R must have the same sign as N, R must be negative.

Therefore K must be negative!

To see why, plug in any value of K which is >= 0. For example:

-11 = 4 * 1 + -15

No. This violates c)ii) because ABS(-15) > ABS(4).

So now we know that

-11 = 4 * (K which is a negative number) + (R which is a negative number).

Let us try with K >= -1:

-11 = 4 * (-1) + (-7)

No. This violates c)ii) because ABS(-7) > ABS(4).

Okay, how about if K <= -3:

-11 = 4 * (-3) + (+1)

No. This violates c)i) because N is negative and R is positive.

Okay, how about K = -2:

-11 = 4 * (-2) + (-3)

This does not violate c)i) because N is negative and R is negative.

This does not violate c)ii) because ABS(-3) < ABS(4).

Great, so R = -3.

Thus it is established by example that the standard requires (a) Truncate.

Using non-standard extensions

Although in standard SQL the correct action for MOD(N, 0) is an error “division by zero”, not every vendor does that — they might return N or they might return NULL.

Although standard SQL MOD() is for integers, some vendors allow FLOAT or DECIMAL. And here is a trick: To get the scalar part of a DECIMAL number:

5.5 % 1

result should be .5.

Perhaps you are thinking “Nonsense! The correct way is

5.5 - FLOOR(5.5)

but I think it depends whether you want to preserve the sign.

Although the standard says that the result data type should be the same data type as M, some vendors will return a value with different precision and scale.

New ocelotgui version

Version 1.1.0 of ocelotgui was released on July 31. So head over to our github site now and download.

Descriptive SQL Style Guide

I have added a large new file on github:

It is open source and I emphasize it is “descriptive” because I am describing rather than prescribing.

Convert SQL Stored Procedures to Lua Functions

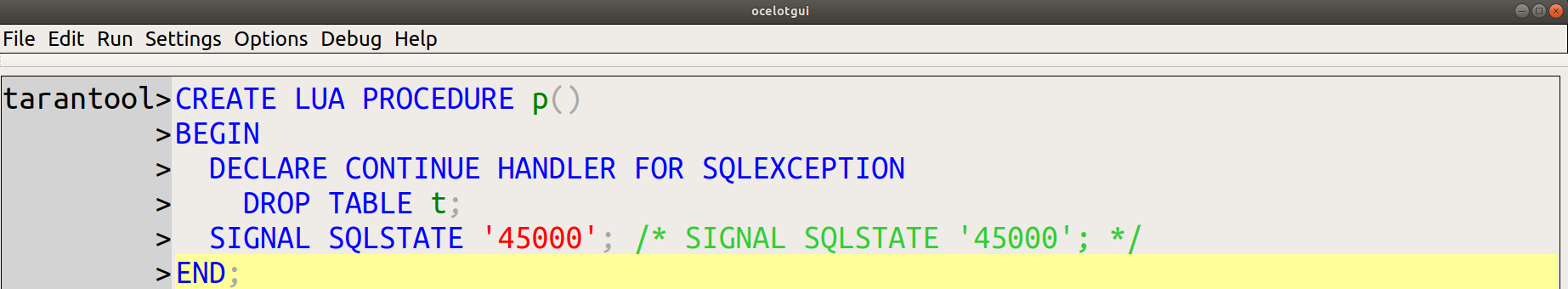

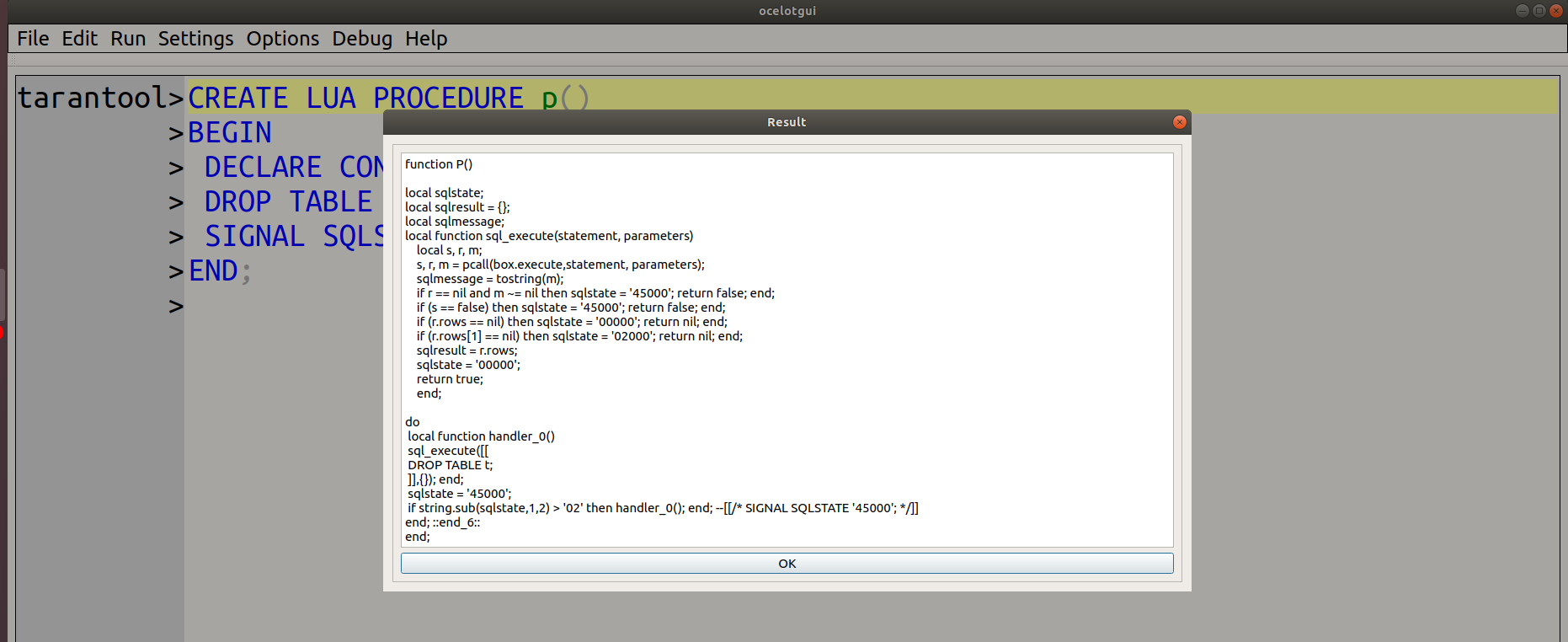

I have written code that converts SQL stored procedures to Lua functions. This might interest people who want to know what’s involved for any C-like target language. Here I will display one step at a time, emphasizing the “Design Decisions” that I had to make, giving increasingly complex examples. Then I’ll note the limitations and say how to get the source code. Alpha.

Frame

CREATE LUA PROCEDURE p() BEGIN /* Empty block */ END;

The word LUA is just a signal that this is a translation job; everything following it is expected to be standard SQL or a supported dialect.

I already had a good

recognizer so parsing the input is easy. And, so far, it’s easy to find Lua syntax analogous to SQL syntax. The result is:

function P() do --[[/* Empty block */]] end; end;

Design Decision #1: I’ll try to preserve comments and punctuation as in the original, although it’s not necessary.

Design Decision #2: SQL’s BEGIN … END can become Lua’s do … end

The alternative was Lua’s while true … break; end but that could have confused some people.

Variables

CREATE LUA PROCEDURE p() BEGIN DECLARE i INTEGER; DECLARE "Peter's string" VARCHAR(4); END;

DECLARE + variable-name is easy because Lua declarations only need a name. Occasionally we have to do some extra processing though.

Result:

do local I; local Peter_39_s_32_string_33_; end; end;

Design Decision #3: I convert regular identifiers to upper case because that’s how SQL does things. This isn’t common practice in Lua.

Design Decision #4: I change delimited (quoted) identifiers by changing all special characters to _s but keeping the original case, and then we add a number to ensure the name is unique. Detail: _33 means “the definition was at token number 33 in the CREATE PROCEDURE statement; I use token numbers frequently to make sure I’m making something unique.

Design Decision #5: I don’t preserve the data type. Lua doesn’t need it often and when it does I can use Lua’s type() function to see whether somethingis a number or a string … except if the value is nil. Nil values will confuse us and users should try to avoid them. The alternative, though, was to store the data type and null status separately for every declared variable, and I think (well, “hope”) that won’t be necessary in practice.

Executing

CREATE LUA FUNCTION f() RETURNS INTEGER BEGIN DECLARE i INTEGER DEFAULT 1; RETURN i + 3; END;

Now we get into SQL statements that must be called from Lua. For this we will use a function named sql_execute(), which is a wrapper around code that is specific to a particular DBMS. The job of sql_execute is to:

Pass parameters.

Send a statement to the DBMS server (for example with mysql_real_query if the DBMS is MySQL/MariaDB).

NB: sending should be done with Lua pcall because results must always be intercepted.

Check return values:

(If there was an error) set sqlstate to error, for example ‘45000’, and return false.

(If there was a result set)

Copy the result set to sqlresult.

(If the result set is empty) set sqlstate to not found, for example ‘02000’, and return nil.

(If the result set is not empty) set sqlstate to okay, for example ‘00000’, and return true.

(If there was no result set) set sqlstate to okay, for example ‘00000’, and return true.

The job of the function calling sql_execute is to:

Define sqlstate (a string) and define sqlresult (a table) accessible throughout the function.

Call sql_execute with whatever parameters are necessary.

Check what sql_execute returns, if necessary.

Result:

function F()

local sqlstate;

local sqlresult = {};

local sqlmessage;

local function sql_execute(statement, parameters)

--[[not illustrated here, see description]]

end;

do

local I; sql_execute([[SELECT 1;

]],{}); I = sqlresult[1][1];

sql_execute([[

SELECT ? + 3;

]],{I}); return sqlresult[1][1];

end; ::end_8::

end;

For the DEFAULT 1 clause, the function will call on the DBMS to execute “SELECT 1;”, and the result will be in the first column of the first row of sqlresult table, so I = select-result. There are no parameters in this case, which is why ,{} appears.

For the RETURN i + 3 statement, the function will call on the DBMS to execute “SELECT ? + 3;”, and since the ? is a placeholder for a parameter there will have to be a parameter, which is why ,{I} appears. Once again sql_execute() will put the result set, which happens to be one row with one column, in sqlresult. So return sqlresult[1][1] will cause the function to return 4.

Design Decision #6: all expressions will go to SQL for evaluation. Certainly for the example here it would be possible to do the addition in Lua, but when expressions contain SQL functions or operators we have to get SQL to do it. So, for simplicity, I always ask SQL to do it.

Design Decision #7: I’ll assume that sql_execute can’t fail for statements that are assigning, like DEFAULT and RETURN. This isn’t necessarily true, but I figured: if there was something wrong with the expression, it would have failed already.

Getting Out of a block

CREATE LUA PROCEDURE p()

BEGIN

BEGIN

CREATE TABLE t (s1 VARCHAR(4) PRIMARY KEY);

INSERT INTO t VALUES ('a');

INSERT INTO t VALUES ('b');

END;

END;

Adding statements in a BEGIN … END causes the usual sql_execute() calls, but also some goto instructions.

Result:

function P()

local sqlstate;

local sqlresult = {};

local sqlmessage;

local function sql_execute(statement, parameters)

--[[See earlier description.]]

end;

do

do

sql_execute([[

CREATE TABLE t (s1 VARCHAR(4) PRIMARY KEY);

]],{});

if string.sub(sqlstate,1,2) > '02' then goto end_7; end;

sql_execute([[

INSERT INTO t VALUES ('a');

]],{});

if string.sub(sqlstate,1,2) > '02' then goto end_7; end;

sql_execute([[

INSERT INTO t VALUES ('b');

]],{});

end; ::end_7::

end; ::end_6::

end;

The instruction

if string.sub(sqlstate,1,2) > '02' then goto end_7; end;

is my substitute for SQL’s implicit action

“Get out of the BEGIN … END block if there is an error”.

Remember that sql_execute() returns an sqlstate value, and the first two characters of this value must be greater than ’02’ if there is an error. So the goto will go to a point just past the end of the inner Lua do … end block, which is marked by a label ::end_7::. This isn’t necessary for INSERT INTO t VALUES (‘b’); because it is the last thing in the block, so it will pass out of the block anyway.

Design Decision #8: goto is the best way to get out of the block. The alternatives were: (a) use while true … end so that break will get out of the block, as discussed earlier, (b) put “if string.sub(sqlstate,1,2) <= '02'" before every sql_execute() call in the block. Although the alternatives would work in this example, I decided they make the code unreadable when the examples get complicated.

Unconditional Flow control

CREATE LUA PROCEDURE p()

BEGIN

label_1:

LOOP

ITERATE label_1;

LEAVE label_1;

END LOOP;

END;

I can replace SQL’s

LOOP ... END LOOP

with Lua’s

while true ... end

. I can replace SQL’s ITERATE with a goto that goes to just before the end, so that the loop repeats. I can replace SQL’s LEAVE with a goto that goes to just after the end, so that the loop ends.

Result:

function P()

local sqlstate;

local sqlresult = {};

local sqlmessage;

local function sql_execute(statement, parameters)

--[[See earlier description.]]

end;

do

while true do

goto LABEL_1_1;

goto LABEL_1_2;

::LABEL_1_1::end;::LABEL_1_2::

end; ::end_6::

end;

Since the ITERATE-derived “goto LABEL_1_1;” skips past the LEAVE-derived “goto LABEL_1_2;” this is an infinite loop. Its only saving grace is that it is valid Lua code.

Conditional Flow Control

CREATE LUA PROCEDURE p()

BEGIN

DECLARE i INTEGER DEFAULT 1;

WHILE i < 5 DO

INSERT INTO t VALUES (i);

SET i = i + 1;

END WHILE;

END;

WHILE, IF, and REPEAT are statements that depend on a condition. I support them all the same way: by passing "SELECT condition;" to SQL, and then (since such a statement will always return one row with a Boolean value) asking whether the first column in the first row of sqlresult is true.

Result:

function P()

local sqlstate;

local sqlresult = {};

local sqlmessage;

local function sql_execute(statement, parameters)

--[[See earlier description.]]

end;

do

local I; sql_execute([[SELECT 1;

]],{}); I = sqlresult[1][1];

while sql_execute([[

SELECT ? < 5 ;

]],{I}) == true and sqlresult[1][1] == true do

sql_execute([[

INSERT INTO t VALUES (?);

]],{I});

if string.sub(sqlstate,1,2) > '02' then goto end_6; end;

sql_execute([[

SELECT ? + 1;

]],{I})

if string.sub(sqlstate,1,2) > '02' then goto end_6; end;

I = sqlresult[1][1];

end;

end; ::end_6::

end;

We've already seen what the rest of the statements in this loop are supposed to generate, the only new thing is that "SELECT ? < 5;" will return true (because the passed parameter I is less than 5) until I becomes 5 (because "SELECT ? + 1;" is inside the loop).

Design Decision #9: I decided to test first if sql_execute() returns true (that is, does not return an error or not found) before checking whether the value in the result set is true. That's reasonable caution -- but I didn't decide to do a similar test for assignment. That's because a failure during condition evaluation could cause an infinite loop, so it is more serious.

Design Decision #10: I'm saying "SELECT ? < 5;" although I dislike non-standard code -- standard code is "VALUES (? < 5);". This is my concession to what seems to be popular, but I am already regretting it, I probably will change this.

Cursors

CREATE LUA FUNCTION f() RETURNS INTEGER

BEGIN